In this post, I’ll make a simple(-ish) breakdown of digital certificates, how TLS works, and how certificate pinning might save your ass. Most importantly, I wanna mention how, in many mobile app use cases, Certificate Pinning most probably will not save your ass.

Certificate Pinning is an add-on on top of an already existing secure communication protocol (TLS/SSL). To understand how it plays into the flow, we’ll need an overview of those funky secure communication protocols.

A little bit of history first: Back in 1995 (when The Usual Suspects came out!), a few smart folks from Netscape got together and developed SSL: a secure communication protocol that uses public key infrastructure (PKI). This means that two dudes across the internet can talk to each other securely without someone spying on their communication. This was very forward-thinking and a great research enterprise for the rest of the internet.

Unfortunately, every version of SSL was, one after the other, found to be vulnerable, reliant on outdated cryptography, and just downright bloated and hard to maintain. So, the internet came up with TLS. After a couple of iterations of TLS, things stabilized into a reasonably secure format (in 2018, with TLS 1.3), which is where we are at now.

TLS is a network protocol that supersedes SSL and uses a public key infrastructure to exchange information between the client and the server.

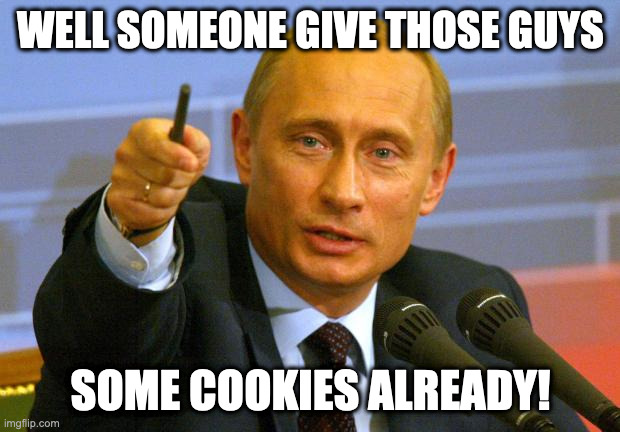

Let’s bring out our players: Alice (internet user), Bob (server owner) and Victor (our attacker) because this is a cryptography post and if we don’t mention Alice and/or Bob, a kitten will die.

Problem: Bob made a cool website for selling shower curtain rings: www.showerlove.com. Alice wants to browse Bob’s website. She needs a safe way to verify that A) the website she will visit is Bob’s website, and B) Victor cannot sniff her traffic.

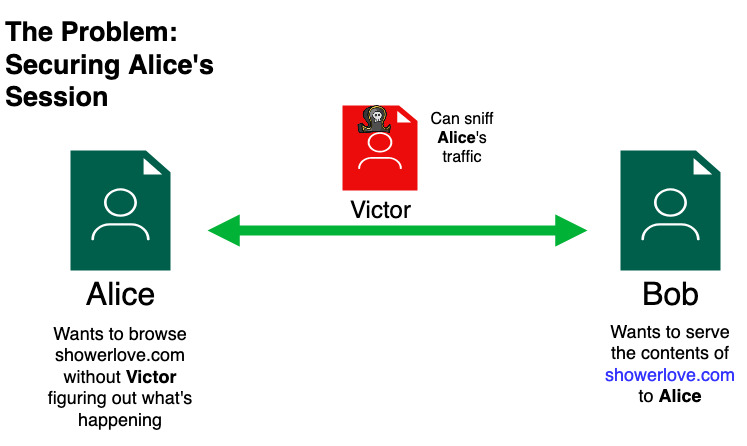

How can Bob declare to the internet that he is the owner of showerlove.com? An easy (although flawed) way of doing this is to delegate our trust: if there is someone that both Alice and Bob trust, and that someone vouches for Bob, then Alice can verify Bob’s identity. This is called a chain of trust:

1. Alice trusts GiantCorp

2. GiantCorp trusts Bob

3. therefore, Alice trusts Bob

So, Bob can go to GiantCorp, a very reputable company that every internet user trusts, and ask them to sign a document that stipulates that Bob is the owner of showerlove.com and that if you browse to that website, you will get content that Bob has put out, not some imposter (like Victor).

What does GiantCorp do and how does Alice establish this chain of trust? Using Digital Certificates.

Let’s get back to our Chain of Trust hierarchy

1. Alice trusts GiantCorp

2. GiantCorp trusts Bob

3. therefore, Alice trusts Bob

Point #1 works by having the browser (Firefox, Chrome, Safari, etc.) preload GiantCorp’s certificates in their codebase. In technical terms, GiantCorp will be one of many Certificate Authorities (or CAs) on the internet. Since their certificates are the original ones that everyone trusts, each of them is called a root certificate.

When Alice downloads a browser, she has all of those “pre-trusted” CA root certificates in the browser, without her needing to do anything more.
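If you want to peek at such a “pre-trusted” list yourself, here is a minimal sketch using Python’s standard `ssl` module. It assumes the Python installation uses the operating system’s default CA bundle, which is similar in spirit to (but not the same list as) what a browser ships with.

```python
import ssl

# Build a client context that trusts the platform's default CA bundle,
# the same kind of pre-installed list a browser or phone comes with.
context = ssl.create_default_context()

# Counts of what got loaded; 'x509_ca' is the number of CA certificates.
stats = context.cert_store_stats()
print("CA certificates loaded:", stats["x509_ca"])

# Print a name for the first few CAs, if any were loaded eagerly.
# On some systems certificates are loaded lazily from a directory, so this
# list can be empty even though verification still works.
for cert in context.get_ca_certs()[:5]:
    subject = dict(rdn[0] for rdn in cert["subject"])
    print(subject.get("organizationName", subject.get("commonName", "?")))
```

Nobody asked Alice about any of the names this prints. They were simply there when she installed the software.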

For point #2, Bob would need to do a couple of things:

Bob would first generate a key-pair: a public and a private key. Those keys are specific to him alone. When we say digital certificate, we’re referring to a file containing the public key (some file formats bundle the private key alongside it), plus a few dates and names.

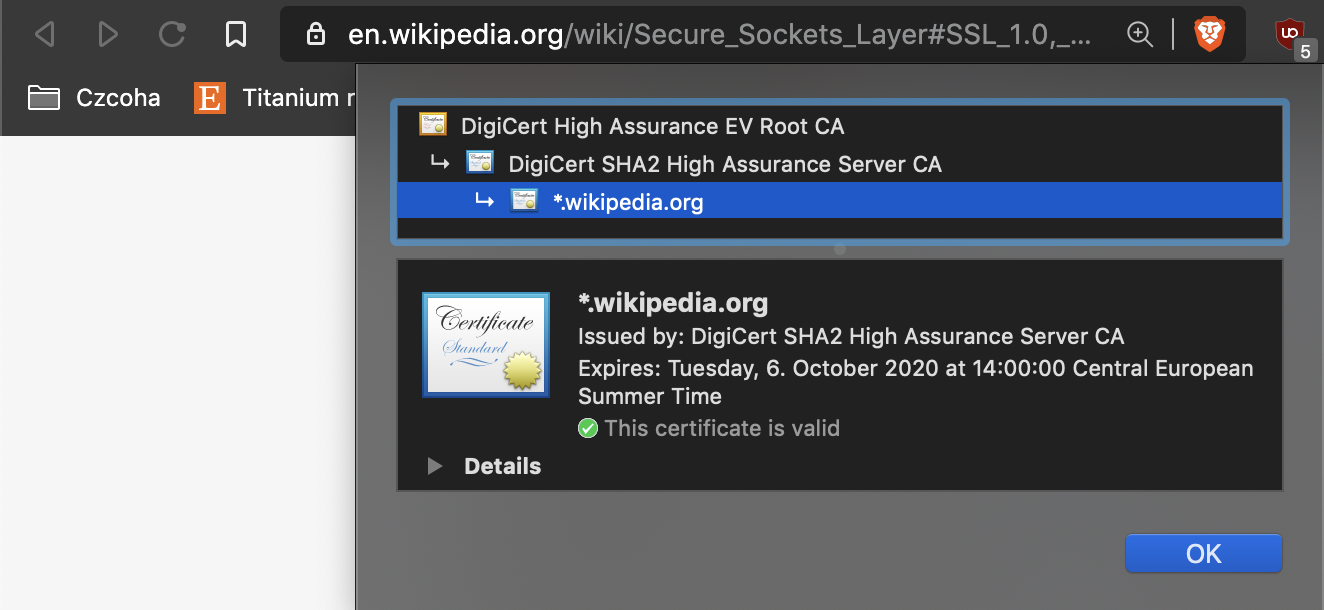

Bob now takes his beautiful certificate and pays a visit to GiantCorp. After GiantCorp deems him to be the rightful owner of showerlove.com, they sign his certificate using the private key behind their root certificate, usually by way of one or more intermediate certificates. The chain of trust will look like this:

| --> GiantCorp's root certificate

| ----> Some whatever intermediate certificate

| --------> Some other whatever intermediate certificate

| ------------> Bob's certificate

So now, Bob has a certificate, verified by GiantCorp \o/

Point #3 is now possible: following the chain of trust, Alice now trusts Bob.

So, a chain of trust is a way for A to trust C, given that A trusts B and B vouches for C.
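To make the “follow the chain” idea concrete, here is a toy sketch in Python. Big assumption: it only checks who claims to have issued whom and does no cryptographic signature verification at all, which a real validator must do. The `ToyCert` class, the `is_trusted` function, and the intermediate names are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyCert:
    subject: str  # who this certificate is about
    issuer: str   # who vouched for (signed) it


def is_trusted(chain, trusted_roots):
    """Walk from the leaf upward: every certificate must be issued by the
    next one in the chain, and the last one must be a root we already trust.
    Toy model only: real validation also verifies each signature."""
    if not chain:
        return False
    for cert, parent in zip(chain, chain[1:]):
        if cert.issuer != parent.subject:
            return False  # broken link: the next cert did not vouch for this one
    root = chain[-1]
    return root.issuer == root.subject and root.subject in trusted_roots


alice_trusted_roots = {"GiantCorp Root"}

bob_chain = [
    ToyCert("showerlove.com", "Intermediate B"),
    ToyCert("Intermediate B", "Intermediate A"),
    ToyCert("Intermediate A", "GiantCorp Root"),
    ToyCert("GiantCorp Root", "GiantCorp Root"),  # roots vouch for themselves
]
victor_chain = [
    ToyCert("showerlove.com", "Victor Root"),
    ToyCert("Victor Root", "Victor Root"),
]

print(is_trusted(bob_chain, alice_trusted_roots))     # True
print(is_trusted(victor_chain, alice_trusted_roots))  # False
```

Notice that the whole decision hinges on what is inside `alice_trusted_roots`.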

TLS is a communication protocol that’s built on top of this concept and makes sure that the packets going in-between Alice and Bob are following this concept.

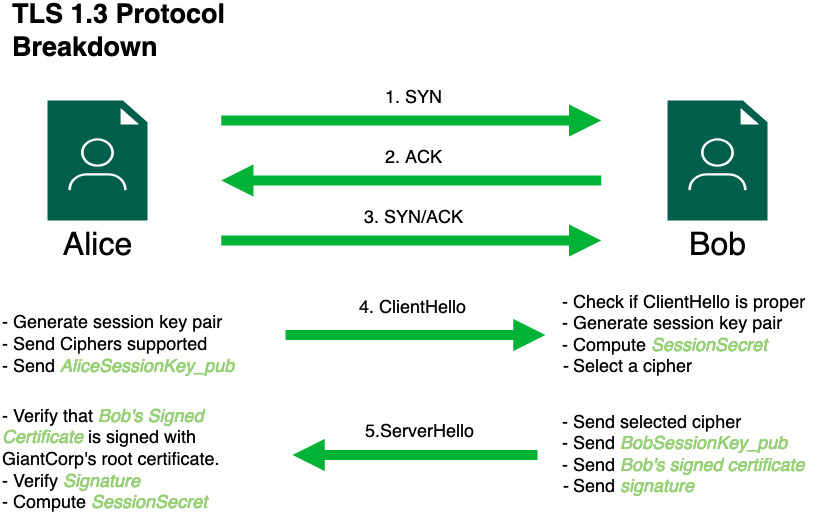

This diagram outlines how TLS 1.3 works. This is a hybrid of Cloudflare’s TLS handshake breakdown and Aumasson’s Serious Cryptography book (TLS chapter).

Steps 1, 2, and 3 are how any TCP connection is established. We won’t be talking about them here. Here’s a brief breakdown.

Step 4 is called ClientHello. In here, Alice is telling the server: “Hi! I want to establish a TLS connection with you, Bob!” In this step, Alice shares a couple of details, including:

* A public key (AliceSessionKey_pub). The key-pair that contains this was made specifically for this TLS session. We’ll call the private key of this public key AliceSessionKey_priv

Bob receives this ClientHello packet, checks that it is nicely formatted, and does a couple of things:

* Calculate his own key-pair for this session: BobSessionKey_pub and BobSessionKey_priv

* Calculate a SessionSecret = DH(BobSessionKey_priv, AliceSessionKey_pub), where DH is Diffie-Hellman: a famous key exchange algorithm. This is a great break-down.

In Step 5, Mr. Bob will now craft a response to Alice: the ServerHello, which contains:

* BobSessionKey_pub

* The digital certificate that we talked about earlier, which has GiantCorp’s root certificate in its chain of trust. This is the most important part of the entire protocol. Without this part, this protocol is a really good way to talk to anyone who says they are Bob, which might be Mr. Victor the Attacker or his Grandmother.

* A signature over the ClientHello, this entire packet, and Bob’s digital certificate, made with the private key that matches that certificate

Alice receives the ServerHello packet and is now ready to do the verification to check if Bob is who he says he is:

* Verify the digital certificate and the signature in the ServerHello.

* Calculate SessionSecret = DH(BobSessionKey_pub, AliceSessionKey_priv). Notice how this mirrors the calculation Bob did earlier with his own private key and Alice’s public key. This is a magic property of Diffie-Hellman: it allows two parties to calculate a common secret together without ever sending it over the wire.

* Use SessionSecret to encrypt any future communications together.
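The Diffie-Hellman trick is easier to believe once you see it run. Here is a toy sketch in Python using plain modular exponentiation. The prime `P` and generator `G` are assumptions picked for readability, not security. Real TLS 1.3 typically uses elliptic-curve groups and feeds the result through a key derivation function before encrypting anything.

```python
import secrets

# Toy public parameters, known to everyone including Victor.
# Assumption: chosen for illustration only, do not reuse.
P = 2**127 - 1  # a Mersenne prime
G = 3


def make_session_keypair():
    """Return (private, public) for one session."""
    priv = secrets.randbelow(P - 3) + 2  # random private value in [2, P - 2]
    pub = pow(G, priv, P)                # public value: G^priv mod P
    return priv, pub


def dh(own_priv, peer_pub):
    """Combine my private key with the other side's public key."""
    return pow(peer_pub, own_priv, P)


alice_session_key_priv, alice_session_key_pub = make_session_keypair()
bob_session_key_priv, bob_session_key_pub = make_session_keypair()

# Only the *_pub values ever cross the wire.
bob_secret = dh(bob_session_key_priv, alice_session_key_pub)
alice_secret = dh(alice_session_key_priv, bob_session_key_pub)

print(alice_secret == bob_secret)  # True
```

Both sides end up with the same number because (G^a)^b and (G^b)^a are the same thing modulo P, while Victor only ever sees G^a and G^b.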

Now, Alice has cryptographic proof that she is viewing shower curtain rings on showerlove.com which are 100% Bob’s shower curtain rings \o/

The above method is very sound and credible, but the whole calculation depends on Alice having a clean collection of root certificates in her browser or mobile device.

Stop the next person you see. Tell them to name a couple of root certificate CAs that they have on their phone. If you get one person to respond to you, I’ll PayPal you my next paycheck. In reality, nobody really checks this or even bothers to note that most CAs are either crooks or idiots or both.

That being said, let’s think stupid a bit: Victor the Attacker can spend several lifetimes learning about math and cryptography to finally break TLS 1.3, OR he can just poison Alice’s root certificates. Victor could inject his own root certificate into this list, maybe using malware, an exploit, or by downright asking the user to please install it for him.

On Android, it’s as easy as getting one file into /system/etc/security/cacerts/. I made a small bash script that takes care of this on a rooted device. An Android exploit could use a similar setup to inject a root certificate into the user’s device. Afterward, Victor can impersonate Bob and serve Alice anything he wants, and the Android system will be okay with it. This is called a Man-in-the-Middle attack over TLS.

Now comes Certificate Pinning: a way to give the application a say in how this TLS protocol works: “Hey. Bob told me that the public key of his certificate is XXYYZZ. I don’t want to accept communications from anyone else, even if they say they are Bob. M’kay”. In that way, if Victor was successful in poisoning Alice’s root certificate table, the application Alice is running will, depending on the implementation, be aware of this and react accordingly.
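Here is a minimal sketch of what that check can look like, written in Python with only the standard library. Two assumptions worth shouting about: it pins the SHA-256 fingerprint of the whole certificate rather than just the public key (extracting the public key needs a parsing library), and the names `fingerprint`, `matches_pin`, `connect_with_pin`, and `PinMismatch` are made up for this example.

```python
import hashlib
import hmac
import socket
import ssl


class PinMismatch(Exception):
    """Raised when the server's certificate is not one we pinned."""


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 of the DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def matches_pin(der_cert: bytes, pinned: set) -> bool:
    actual = fingerprint(der_cert)
    return any(hmac.compare_digest(actual, pin.lower()) for pin in pinned)


def connect_with_pin(host: str, pinned: set, port: int = 443) -> ssl.SSLSocket:
    """Do the normal TLS verification first, then the extra pin check."""
    context = ssl.create_default_context()
    raw = socket.create_connection((host, port), timeout=10)
    try:
        tls = context.wrap_socket(raw, server_hostname=host)
    except Exception:
        raw.close()
        raise
    der_cert = tls.getpeercert(binary_form=True)
    if der_cert is None or not matches_pin(der_cert, pinned):
        tls.close()
        raise PinMismatch(f"certificate for {host} matches no pinned fingerprint")
    return tls
```

A call would look like `connect_with_pin("www.showerlove.com", {"<hex fingerprint Bob gave you>"})`. Even if Victor’s root certificate passes the normal chain-of-trust check, his certificate hashes to something else and the connection is dropped. The flip side: the day Bob renews his certificate, this exact pin stops matching too.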

For a browser, like Chrome or Firefox, there was a very short-lived feature called HTTP Public Key Pinning (HPKP), which did something very similar to what we now consider Certificate Pinning: it’s a header that a server would deliver to the client, and on later connections the client would, after receiving the ServerHello, check if the server’s public key matches the one the client has.
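For a feel of what the browser had to remember, here is a small Python sketch that parses the value of a `Public-Key-Pins` header into its parts. The directive names (`pin-sha256`, `max-age`, `includeSubDomains`) come from the HPKP specification (RFC 7469). The pin values below are placeholders generated from made-up labels, and `parse_hpkp` is a hypothetical helper that skips edge cases like `report-uri` values containing semicolons.

```python
import base64
import hashlib


def placeholder_pin(label: bytes) -> str:
    """Base64 of a SHA-256 digest, the shape of a real pin (fake content)."""
    return base64.b64encode(hashlib.sha256(label).digest()).decode("ascii")


def parse_hpkp(header_value: str) -> dict:
    policy = {"pins": [], "max_age": None, "include_subdomains": False}
    for directive in header_value.split(";"):
        directive = directive.strip()
        if not directive:
            continue
        # Split on the first '=' only: base64 pins may end with '=' padding.
        name, _, value = directive.partition("=")
        name = name.strip().lower()
        value = value.strip().strip('"')
        if name == "pin-sha256":
            policy["pins"].append(value)
        elif name == "max-age":
            policy["max_age"] = int(value)
        elif name == "includesubdomains":
            policy["include_subdomains"] = True
    return policy


primary = placeholder_pin(b"bob's current key")
backup = placeholder_pin(b"bob's backup key")
header = f'pin-sha256="{primary}"; pin-sha256="{backup}"; max-age=5184000; includeSubDomains'

policy = parse_hpkp(header)
print(policy["max_age"], len(policy["pins"]), policy["include_subdomains"])
```

The `max-age` part is where the trouble hides: once a browser stored the pins, it would keep enforcing them for that many seconds, whether or not the server still had the matching keys.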

The idea was pretty good. It gave folks who don’t trust HTTPS to do the right thing on its own a way to preemptively protect themselves. In practice, this policy was a can of worms: if a server changed hosting providers or its CA, it would end up with a different set of public keys and no easy way to revoke the pinned public key. The client could use the certificate revocation server to check this, but it was way too much overhead.

Furthermore, implementing this technique was not easy, and if a server had a misconfiguration due to human error or malware, it could be cut off from every user who had previously visited it. This is why HPKP was deprecated by the Chrome team only a few years after its release.

Certificate Pinning is similar in that its implementation is not easy. OWASP has a lengthy explanation on what to pin and how, but if something goes wrong, the results can be pretty bad.

Putting the complexity argument aside: what’s the actual attack vector that this technique would protect against in mobile apps? Protecting user data in transit from a remote attacker. If you’re running a banking app, you should be using TLS 1.3 client-side and, as much as possible, having your server accept only TLS 1.3 connections. Also, you should worry about downgrade attacks. This should cover you for 90% of cases.
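As a rough illustration of the client-side half of that advice, here is a sketch in Python’s standard `ssl` module that refuses anything older than TLS 1.3. It assumes the underlying OpenSSL build supports TLS 1.3, and `make_tls13_only_context` is a made-up helper name. A mobile app would do the equivalent in its own networking stack.

```python
import ssl


def make_tls13_only_context() -> ssl.SSLContext:
    # Start from the defaults: certificate verification and hostname
    # checking are both switched on.
    context = ssl.create_default_context()
    if not ssl.HAS_TLSv1_3:
        raise RuntimeError("this Python/OpenSSL build has no TLS 1.3 support")
    # Refuse to negotiate anything older, so a forced downgrade ends in a
    # failed handshake instead of a weaker connection.
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context


context = make_tls13_only_context()
print(context.minimum_version.name)
```

The trade-off is the usual one: any server that has not caught up to TLS 1.3 becomes unreachable from this client, which is fine when you own both ends.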

If the user has malware or an exploit on their device, or just downright accepted a rogue certificate to be installed on it, that data could be compromised. If you’re worried about this scenario, then Certificate Pinning is good for you.

If, however, what you’re worried about is actually exposing proprietary APIs, the actual parameters that get sent, or that you might be doing something that the user shouldn’t know about, then Certificate Pinning is an unnecessary overhead, namely because Victor the Attacker doesn’t need to sniff traffic to figure any of those out: he can simply reverse the app, disable Certificate Pinning, and continue with his job. There are actually more posts on disabling certificate pinning than there are on using them correctly.

Heck, just by running apktool d myapp.apk | r2 -zzz ./classes.jar | grep -i x509, you can see if Certificate Pinning is enabled and exactly where.
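The same kind of search can be sketched in a few lines of Python over an already-decompiled app folder. This is an illustration under assumptions, not a replacement for the command above: the marker strings are guesses at what pinning code tends to mention (`x509` as in the grep above, plus OkHttp’s `CertificatePinner` class), and the function names are made up.

```python
import os

# Assumed markers: lowercase substrings that pinning code often contains.
MARKERS = ("x509", "certificatepinner")


def find_hints_in_text(text):
    """Return (line_number, line) for every line mentioning a marker."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        lowered = line.lower()
        if any(marker in lowered for marker in MARKERS):
            hits.append((number, line.strip()))
    return hits


def find_hints_in_tree(root_dir):
    """Walk a decompiled app folder and collect (path, line_number, line)."""
    results = []
    for folder, _subdirs, files in os.walk(root_dir):
        for name in files:
            path = os.path.join(folder, name)
            try:
                with open(path, "r", encoding="utf-8", errors="ignore") as handle:
                    text = handle.read()
            except OSError:
                continue  # unreadable file: skip it
            results.extend((path, n, line) for n, line in find_hints_in_text(text))
    return results


sample = "invoke-static {v0}, Lcom/example/Net;->setup()V\nLjavax/net/ssl/X509TrustManager;"
print(find_hints_in_text(sample))
```

Pointing `find_hints_in_tree` at the folder a decompiler produced gives Victor a list of files and line numbers to start patching, which is the whole point: the pinning logic ships inside the app he already has.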

If the goal of Certificate Pinning is to protect user data, in transit, from a potentially-compromised device, then it might be a good idea, if you can handle the overhead of managing certificates properly. If it’s to protect proprietary APIs or as an anti-reversing mechanism, it is not necessary.

Thank you for reading this post. I hope it was as useful for you as it was fun for me to write :)

–

AJ